Multivariate testing vs. A/B testing

The difference between multivariate testing and A/B testing

What is the difference between A/B testing and multivariate testing? Let's look at the methodology, common uses, advantages, and limitations of each testing method.

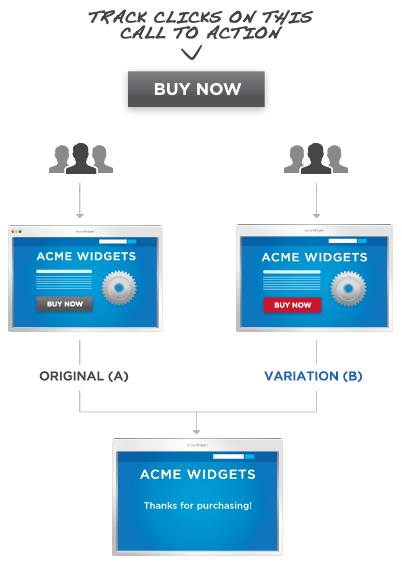

A/B testing explained

A/B testing, which you may also have heard called split testing, is a website optimization method in which the conversion rates of two versions of a page, version A and version B, are compared with each other using live traffic. Each site visitor is assigned to one version or the other. By tracking how visitors interact with the page they are shown (which videos they watch, which buttons they click, or whether they sign up for a newsletter), you can determine which version of the page is more effective.
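The assignment step can be sketched as follows. This is a minimal illustration, not how any particular testing tool works: the function name `assign_variant` and the `visitor-N` identifiers are hypothetical, and hashing the visitor ID is just one common way to make sure a returning visitor keeps seeing the same version.

```python
import hashlib

def assign_variant(visitor_id: str, variants=("A", "B")) -> str:
    """Deterministically map a visitor ID to one variant with roughly equal odds."""
    # Hash the ID so the same visitor always lands in the same bucket.
    digest = hashlib.sha256(visitor_id.encode("utf-8")).hexdigest()
    return variants[int(digest, 16) % len(variants)]

# Simulate 10,000 hypothetical visitors and count how many see each version.
counts = {"A": 0, "B": 0}
for i in range(10_000):
    counts[assign_variant(f"visitor-{i}")] += 1

print(counts)
```

Passing a longer tuple such as `("A", "B", "C", "D")` as `variants` splits the same traffic into more, smaller groups.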

Common uses of A/B testing

A/B testing is the least complex method of evaluating a page design and is useful in a wide range of situations.

One of the most common ways to use A/B testing is to test two very different design directions against each other. For example, the current version of a company's home page might have text-based calls to action (CTAs), while the new version might eliminate most of the text but include a new top bar advertising the latest product. Once enough visitors have been funneled to both pages, the number of clicks on each page's version of the CTA can be compared. It is important to note that even though many design elements change in this kind of A/B test, only the impact of the design as a whole on each page's business goal is tracked, not the impact of individual elements.
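One way to compare the click counts is a two-proportion z-test. The article does not name a specific statistical method, so treat this as an assumption. The sketch below uses only the Python standard library, and the visitor and click numbers are hypothetical values chosen for illustration.

```python
import math

def two_proportion_z(clicks_a, visitors_a, clicks_b, visitors_b):
    """Return (rate_a, rate_b, z, two_sided_p) for a pooled two-proportion z-test."""
    rate_a = clicks_a / visitors_a
    rate_b = clicks_b / visitors_b
    # Pooled click rate under the null hypothesis that both versions perform the same.
    pooled = (clicks_a + clicks_b) / (visitors_a + visitors_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / visitors_a + 1 / visitors_b))
    z = (rate_b - rate_a) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return rate_a, rate_b, z, p_value

# Hypothetical results: 1,000 visitors per version.
rate_a, rate_b, z, p_value = two_proportion_z(100, 1000, 150, 1000)
print(f"A: {rate_a:.1%}  B: {rate_b:.1%}  z = {z:.2f}  p = {p_value:.4f}")
```

A small p-value suggests the difference in CTA clicks is unlikely to be noise. As the paragraph above notes, it says nothing about which individual design change caused the difference.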

A/B testing is also useful as an optimization option for pages where only one element is up for debate. A pet store running an A/B test on its site might, for example, discover that 85% more users are willing to sign up for a newsletter held up by a cartoon mouse than for one coming out of a boa constrictor. When A/B testing is used this way, a third or even a fourth version of the page is often included in the test, which is sometimes called an A/B/C/D test. This of course means that site traffic has to be split into thirds or fourths, with a smaller share of visitors seeing each version.

Advantages of A/B testing

A/B testing is simple in concept and design, yet it is a powerful and widely used testing method.

Because the number of tracked variables is kept small, these tests can deliver reliable data very quickly, since they do not require a large amount of traffic to run. This is especially helpful if your site has a small number of daily visitors, where splitting traffic into more than three or four segments would make it hard to finish a test. In fact, A/B testing is so fast and so easy to interpret that some large sites use it as their primary testing method, running cycles of tests one after another rather than more complex multivariate tests.

A/B testing is also a good way to introduce the concept of optimization through testing to a skeptical team, since it can quickly demonstrate the quantifiable impact of a simple design change.

Limitations of A/B testing

A/B testing is a versatile tool, and when paired with smart experiment design and a commitment to iterative cycles of testing and redesign, it can help you make large improvements to your site. It is important to remember, however, that the limitations of this kind of test are summed up in its name. A/B testing is best used to measure the impact of two to four variations on interactions with the page. Tests with more variations take longer to run, and A/B testing reveals nothing about how variables on a single page interact with each other.

If you need information about how many different elements interact with one another, multivariate testing is the better approach.

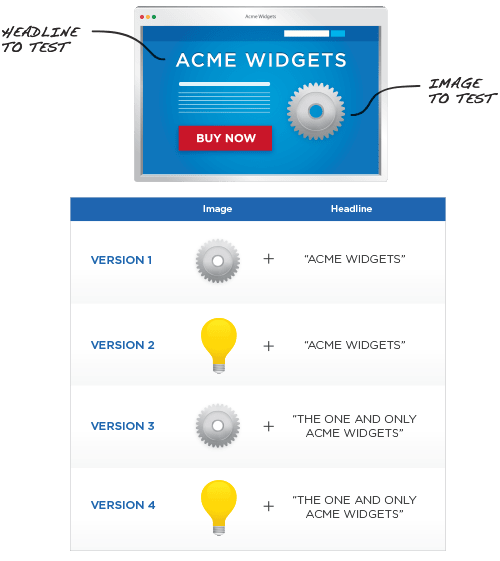

Multivariate testing explained

Multivariate testing uses the same core mechanism as A/B testing, but compares a larger number of variables and reveals more about how those variables interact with one another. As in an A/B test, traffic to a page is split between different versions of the design. The purpose of a multivariate test, then, is to measure how effective each design combination is at reaching the ultimate goal.

Once a site has received enough traffic to run the test, the data from each variation is compared, not only to find the most successful design but also to potentially reveal which elements have the greatest positive or negative impact on a visitor's interaction.

Common uses of multivariate testing

The most common example of multivariate testing is a page on which several elements are up for debate, for example a page that contains a sign-up form, some kind of catchy headline text, and a footer. To run a multivariate test on this page, rather than creating a radically different design as in A/B testing, you might create two different lengths of sign-up form, three different headlines, and two footers. You would then funnel visitors to all possible combinations of these elements. This is also known as full factorial testing, and it is one of the reasons multivariate testing is often recommended only for sites with a substantial amount of daily traffic: the more variations that need to be tested, the longer it takes to obtain meaningful data from the test.
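The number of pages in the example above can be worked out by enumerating every combination. The following is a minimal sketch using Python's standard `itertools.product`; the element labels are placeholders invented for illustration.

```python
from itertools import product

# The three page elements from the example, with placeholder labels.
form_lengths = ["short form", "long form"]
headlines = ["headline 1", "headline 2", "headline 3"]
footers = ["footer 1", "footer 2"]

# Full factorial design: every form length with every headline with every footer.
combinations = list(product(form_lengths, headlines, footers))

for number, combo in enumerate(combinations, start=1):
    print(number, combo)

print(len(combinations))  # 2 * 3 * 2 = 12
```

Two forms, three headlines, and two footers already require twelve distinct page versions, and each one needs its own share of the traffic.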

After the test has run, the variables on each page variation are compared with each other and with their performance in the context of the other versions in the test. What emerges is a clear picture of which page performs best and which elements are most responsible for that performance. For example, it may turn out that changing the footer has very little effect on the page's performance, while changing the length of the sign-up form has a huge impact.
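One simple way to see which elements matter is to average the conversion rate over every combination that shares a given element value, sometimes called a main-effects comparison. The sketch below is an illustration only: the helper names are hypothetical and the results are made-up numbers, constructed so that the form length matters and the footer does not.

```python
from collections import defaultdict
from itertools import product

factors = {
    "form": ["short", "long"],
    "headline": ["h1", "h2", "h3"],
    "footer": ["f1", "f2"],
}

def made_up_result(form, headline, footer):
    """Return invented (visitors, conversions) for one combination."""
    conversions = 100 if form == "short" else 50       # form length matters a lot
    conversions += {"h1": 0, "h2": 10, "h3": 20}[headline]
    return 1000, conversions                            # footer has no effect here

results = {combo: made_up_result(*combo) for combo in product(*factors.values())}

def main_effects(results, factor_names):
    """Pool visitors and conversions per element value; return conversion rates."""
    totals = defaultdict(lambda: [0, 0])
    for combo, (visitors, conversions) in results.items():
        for name, level in zip(factor_names, combo):
            totals[(name, level)][0] += visitors
            totals[(name, level)][1] += conversions
    return {key: conv / vis for key, (vis, conv) in totals.items()}

effects = main_effects(results, list(factors))
for (name, level), rate in effects.items():
    print(f"{name:8} {level:6} {rate:.1%}")
```

With these invented inputs, the two footer values come out identical while the two form lengths differ sharply, which mirrors the pattern described in the paragraph above. A real analysis would also check interactions between elements and statistical significance.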

Advantages of multivariate testing

Multivariate testing is a powerful way to help you target redesign efforts at the parts of your page where they will have the most impact. This is especially useful when designing landing page campaigns, for example, because data about the impact of a particular element's design can be applied to future campaigns, even if the context of the element has changed.

Limitations of multivariate testing

The single biggest limitation of multivariate testing is the amount of traffic needed to complete the test. Because all experiments are fully factorial, changing too many elements at once can quickly add up to a very large number of possible combinations that must be tested. Even a site with fairly high traffic may struggle to complete a test with more than 25 combinations within a reasonable amount of time.
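A rough back-of-the-envelope calculation shows why. The sketch below assumes traffic is split evenly and that each combination needs a fixed number of visitors before its data is usable. Both the function name and the numbers are hypothetical, and a real required sample size depends on the baseline conversion rate and the size of the effect you want to detect.

```python
import math

def days_to_finish(num_combinations, visitors_per_combination, daily_visitors):
    """Estimate test duration in days, assuming an even traffic split."""
    total_needed = num_combinations * visitors_per_combination
    return math.ceil(total_needed / daily_visitors)

# Hypothetical site: 2,000 visitors a day, 5,000 visitors needed per combination.
for combos in (2, 4, 12, 25, 48):
    print(combos, "combinations:", days_to_finish(combos, 5000, 2000), "days")
```

Under these assumptions the duration grows in direct proportion to the number of combinations, so every added element value multiplies the waiting time.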

When using multivariate tests, it is also important to consider how they fit into your cycle of testing and redesign as a whole. Even when you have information about the impact of a particular element on your site, you may want to run additional A/B tests to explore other, radically different ideas. And sometimes it may not be worth the extra time required to run a full multivariate test when several well-designed A/B tests will do the job well.

The bottom line on comparing test formats

Don't let the differences between A/B testing and multivariate testing lead you to think of them as opposites. Instead, think of them as two powerful optimization methods that complement each other. Choose one or the other, or use them both together to get the most out of your site.