How to Write Automation Tests for Feature Flags with Cypress.io and Selenium

At Optimizely, we deploy much of our code behind feature flags because doing so shortens our release cycle, lets us run A/B tests, and makes deploying code changes safer through rollouts. While feature flags (aka feature toggles) have helped us deliver better products more quickly, they tend to be very difficult to write automation tests against. By nature, feature flagging makes your code base non-deterministic, which wreaks havoc on the automation tests written against it.

An analogy I like to make is that automation tests are trains and the code is the track. Automation tests are amazing at validating that the track is built correctly, because the train can run quickly down the track and let you know whether everything is in place. However, when testing against a feature flag, your track may change based on the logic of your flag, and your train may derail because part of the track that it expects to run on is no longer there. This makes your automation tests flaky and gives you a lot of false-positive signals.

Because of this tension between track and train, or feature flag and automation test, developers and customers often ask me: “How do you create automation tests against feature flags?”

Here at Optimizely, our development teams use a variety of different strategies to aid our automation framework in ensuring that the code it is testing is deterministic. We would like to share with you some of these today.

Audience Conditions

Optimizely Full Stack lets you create an audience condition that your test runner passes in, which ensures that the test runner is either always bucketed into the feature or never bucketed into it.

A quick note about the example below: at Optimizely, the Automation Team uses Cypress.io and Selenium to run our end-to-end and regression automation tests. This specific example was written in Cypress.io. We really like Cypress.io because it is fast and easy to develop in, has screenshots and recordings, and offers robust reporting. You can find our simple example app and our Cypress.io automation test code here.

In our example app below, we have added an Optimizely feature flag around the astronaut image. When the flag is ON we will see the astronaut (Feature On), and when the flag is OFF we won’t (Feature Off).

We want Cypress.io to assert on both conditions (flag ON and flag OFF) and validate that the picture is correctly displayed or removed.

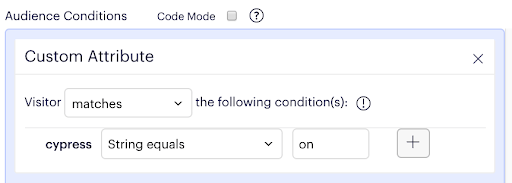

In the Optimizely feature flag, we create an audience condition called cypress. To trigger this condition, we set it to match any string equal to “on”.

In the Cypress.io test runner, we pass the query parameter cypress=on and validate that the new feature is on.

    it('Validate that the astro_boy feature is enabled', function () {
      // Visit app with audience query parameter
      cy.visit('/?cypress=on')

      // Validate feature is enabled
      cy.get('#astronaut')
        .should('exist')
    })

This allows our Cypress.io test runner to know exactly what to test for and assert on that.

As you can see below, the Cypress.io test runner executes our actual test cases in our app, toggling the feature on and off and asserting that the image is shown or not shown.

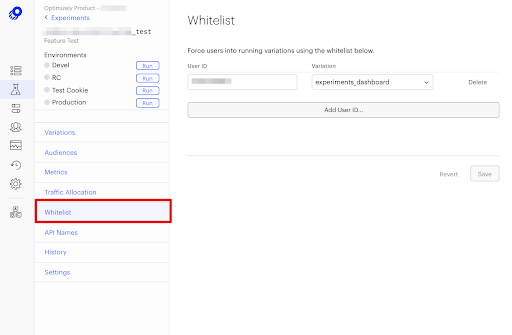
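On the application side, the `cypress=on` query parameter has to become a user attribute that the audience condition can match. The example app's code is not shown here, so the following Python sketch is only an illustration under assumptions: the function names `attributes_from_url` and `in_cypress_audience` are hypothetical, and the exact-match check is a simplified stand-in for the audience evaluation that Optimizely performs once it receives the attributes.

```python
from urllib.parse import urlparse, parse_qs

AUDIENCE_ATTRIBUTE = "cypress"  # attribute name used in the article
AUDIENCE_VALUE = "on"           # exact-match value used in the article


def attributes_from_url(url):
    """Collect user attributes from the query string of the visited URL.

    Hypothetical helper: the real example app may gather attributes differently.
    """
    query = parse_qs(urlparse(url).query)
    # parse_qs returns a list per parameter; keep the first value of each.
    return {name: values[0] for name, values in query.items()}


def in_cypress_audience(attributes):
    """Simplified model of an exact string match: cypress == "on"."""
    return attributes.get(AUDIENCE_ATTRIBUTE) == AUDIENCE_VALUE
```

In a real app, the resulting dictionary would be handed to the SDK as the user's attributes when asking whether the feature is enabled. The local check only mirrors the match so that the idea can be run in isolation.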

Whitelist Functionality

Optimizely also lets you whitelist your test runner for a feature test (an experiment that you run on a specific feature flag). Pass in the user ID that your test runner will use, so that Cypress.io always sees the variation you want it to assert against. Note: this functionality is available only for Feature Tests, not for Rollouts. You can see how to do this in Optimizely in the screenshot below.
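Conceptually, whitelisting means that a listed user ID skips normal bucketing and always lands in the chosen variation. The sketch below is a simplified model of that idea, not Optimizely's implementation: `choose_variation`, `bucket_by_hash`, the user ID `cypress-runner`, and the variation names are all hypothetical, and SHA-256 is used only as a stand-in for the hashing that real bucketing relies on.

```python
import hashlib


def bucket_by_hash(user_id, experiment_key, variations):
    """Stand-in for normal bucketing: stable per user, but arbitrary."""
    digest = hashlib.sha256(f"{experiment_key}:{user_id}".encode()).hexdigest()
    return variations[int(digest, 16) % len(variations)]


def choose_variation(user_id, experiment_key, variations, whitelist):
    """Return the whitelisted variation if one is set, else bucket normally."""
    forced = whitelist.get(user_id)
    if forced in variations:
        return forced
    return bucket_by_hash(user_id, experiment_key, variations)


# Hypothetical setup: the test runner's user ID is pinned to one variation.
variations = ["variation_a", "variation_b"]
whitelist = {"cypress-runner": "variation_b"}
runner_variation = choose_variation("cypress-runner", "exp1", variations, whitelist)
```

Because the whitelist is consulted first, the test runner can assert against `variation_b` on every run, while other users still go through ordinary bucketing.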

Set Forced Variation

Optimizely also runs Selenium tests on a BDD framework. For these tests, we use the ability to set a forced variation directly in the SDK. The following example is a Selenium implementation that forces one variation and then validates that feature path. (Note: forced variation works only with Feature Tests, not Feature Rollouts.)

Below is a sample Gherkin scenario in which we want our Selenium test runner to ensure that the variation under test is always the create_flow variation (the variation we want to test), rather than being randomly assigned to any other variation.

    Scenario: Create an AB Campaign with url targeting via simplified creation flow
      Given I open a browser as persona "V2User"
      Then I force bucket user "$acc_id" into "create_flow" of experiment "exp1"
      When I visit "/v2/projects"
      Then I should see "Experiments"
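Before the SDK can be called, the force-bucket step has to be turned into an experiment key, a user ID, and a variation. How our step definitions do this is not shown in this post, so the following is a minimal sketch under assumptions: the regular expression, the function `parse_force_bucket_step`, and the idea that `$acc_id` is looked up in a dictionary of scenario variables are all hypothetical. A BDD library would normally handle the matching through its own step decorators.

```python
import re

# Hypothetical pattern for the force-bucket step shown in the scenario.
FORCE_BUCKET_STEP = re.compile(
    r'I force bucket user "(?P<user_id>[^"]+)" '
    r'into "(?P<variation>[^"]+)" '
    r'of experiment "(?P<experiment_key>[^"]+)"'
)


def parse_force_bucket_step(step_text, context):
    """Return (experiment_key, user_id, variation) for a force-bucket step.

    `context` is a hypothetical dict of scenario variables. A user ID written
    as "$name" is looked up there; anything else is used literally.
    """
    match = FORCE_BUCKET_STEP.search(step_text)
    if match is None:
        raise ValueError(f"not a force-bucket step: {step_text!r}")
    user_id = match.group("user_id")
    if user_id.startswith("$"):
        user_id = context[user_id[1:]]
    return match.group("experiment_key"), user_id, match.group("variation")


step = 'Then I force bucket user "$acc_id" into "create_flow" of experiment "exp1"'
parsed = parse_force_bucket_step(step, {"acc_id": "12345"})
```

The three values in `parsed` correspond to the experiment key, user ID, and variation that the forced-variation call needs.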

Our actual Python code calls the SDK, passing in the key of the experiment, the ID from the test runner, and the variation we want to test.

    assert fullstack.set_forced_variation(
        experiment_key, user_id, variation, datafile_key
    ), 'Failed to clear set_forced_variation'

    # Double-check
    assert fullstack.get_variation(experiment_key, user_id, datafile_key) is None, 'Failed to clear set forced variation'

These are just some of the strategies our automation team at Optimizely uses to approach Feature Tests and Feature Rollouts so that we test new code deterministically.

Do you have an awesome strategy not seen here? Or do you have any questions about what we are doing? We would love to hear more from you! Contact us at quality@optimizely.com

If you’re interested in getting started with feature flag management and controlled rollouts, check out our free flagging product: Optimizely Rollouts.

- Last modified: 4/28/2025 2:49:57 PM