Feature flags vs blue-green deployments: Less risk and more control

A quick primer: blue-green deployments involve redirecting user traffic to a different set of servers that host your updated application. Feature flags, by contrast, are code-based: they let you expose users to a new update by making changes at the application level.

This blog post provides an overview of how feature flags solve challenges similar to those of blue-green deployments, but with more control and less engineering time. Let’s take a look:

A blunt tool: Blue-green deployments

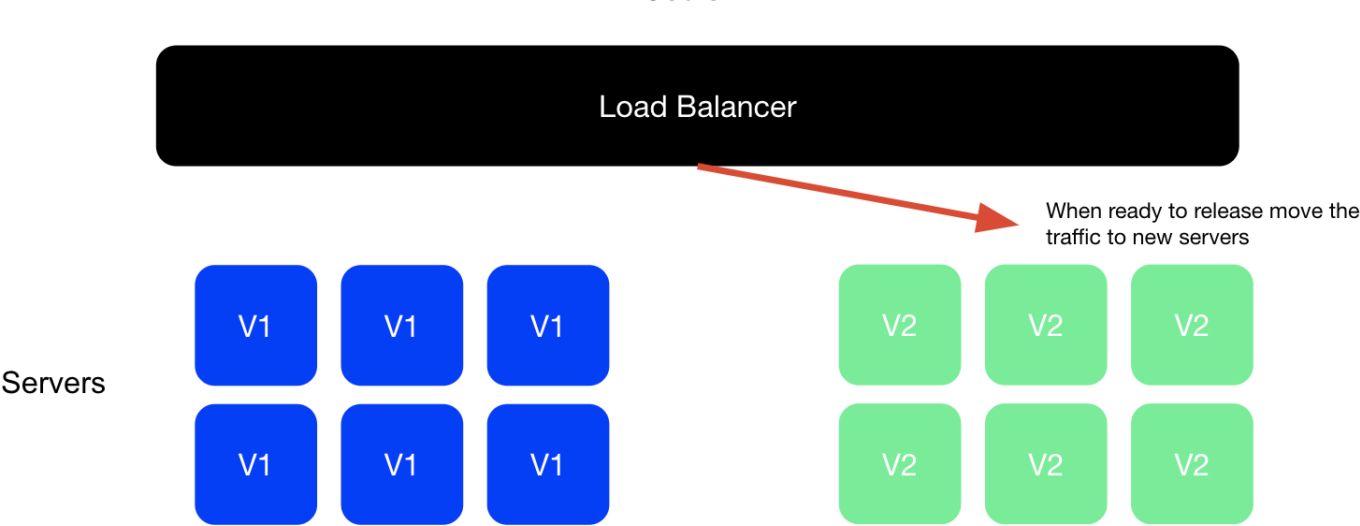

In a blue-green deployment, you designate one portion of your servers to host the new version of your application and another portion to host the previous version. In the diagram above, the blue group of servers hosts your current version, while the green group hosts your new version. To expose users to the update, you have to coordinate with the operations team to shift traffic via the load balancer (also known as the traffic router).

If you’re doing a release that updates multiple features and you discover a bug in one of them, you can easily revert to the previous version by shifting traffic back to the “blue group” of servers. However, this is also what makes blue-green deployments a blunt solution: they don’t deliver feature-level control, so you have to roll back the entire update to shut off just one feature.
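To make the “blunt tool” point concrete, here is a minimal Python sketch of the traffic switch. It assumes a toy router object rather than any real load balancer’s API; `BlueGreenRouter`, `route`, and `switch_to` are hypothetical names. The point it illustrates is that cutover and rollback are the same all-or-nothing operation.

```python
from __future__ import annotations

import random
from dataclasses import dataclass


@dataclass
class BlueGreenRouter:
    """Hypothetical load-balancer model: all traffic goes to one pool."""

    pools: dict[str, list[str]]  # e.g. {"blue": [...], "green": [...]}
    active: str = "blue"         # pool currently receiving traffic

    def route(self) -> str:
        # Every request lands on a server in the active pool, so every user
        # sees the same release, with all of its features.
        return random.choice(self.pools[self.active])

    def switch_to(self, colour: str) -> None:
        # Cutover and rollback are the same operation: repoint all traffic.
        if colour not in self.pools:
            raise ValueError(f"unknown pool: {colour}")
        self.active = colour


router = BlueGreenRouter({"blue": ["blue-1", "blue-2"], "green": ["green-1", "green-2"]})
router.switch_to("green")  # release v2: every request now hits green
router.switch_to("blue")   # one buggy feature in v2 means rolling back all of v2
```

There is no way to express “v2, minus the broken feature” in this model: the only lever is which pool receives traffic.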

Pros and cons of blue-green deployments

- Pro: Easily reverts a release by rolling back traffic to a blue environment

- Pro: Doesn’t require adding code to your application

- Con: Rolls back the entire release instead of just the buggy feature

- Con: Doesn’t enable feature-level granularity, just release-level changes

- Con: Splits traffic at the server level, making it difficult to grant or limit access for specific users

- Con: Often requires developers and operations to coordinate access to infrastructure

- Con: Can be slower to switch over, as load balancers must wait for existing connections to drain

A precise solution: Feature-flag deployments

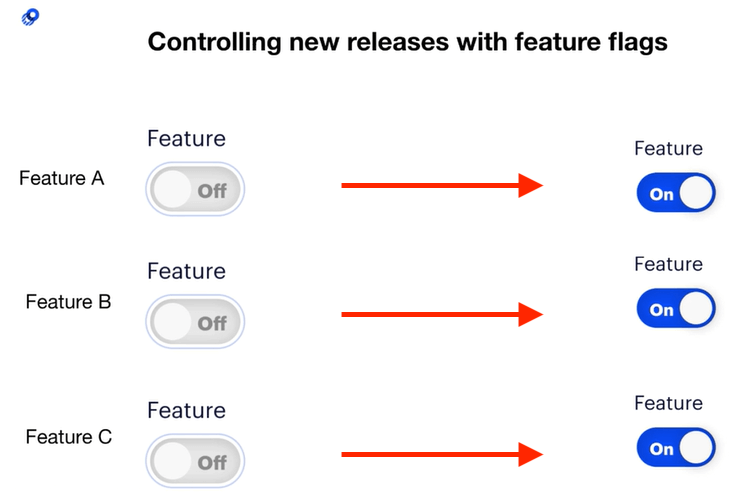

With feature flags (also known as feature toggles), each feature in your software update has its own flag, or toggle, that can be controlled independently of the full release. With a feature flag service such as Optimizely Rollouts, you create the flags in the platform’s UI, then wrap new features or code paths in feature flag code.

This time, if you notice a bug in one of your features, you don’t have to roll back the entire release. You can simply turn off the glitchy feature in your feature management dashboard, without a code deployment. In this way, feature flags provide more control, are more responsive, and save engineering time compared with blue-green deployments, which require cross-team collaboration and maintaining multiple versions across separate sets of servers.
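Here is a minimal Python sketch of wrapping features in flags. It assumes a hypothetical in-memory `FlagStore` standing in for a real flag service; it is not the Optimizely Rollouts SDK, whose API differs. Two features ship in the same deployment, and one is switched off without touching the other.

```python
from __future__ import annotations


class FlagStore:
    """Hypothetical in-memory stand-in for a feature flag service.

    A real service would sync flag state from its dashboard; this sketch
    keeps it in a dict so the control flow is easy to follow.
    """

    def __init__(self) -> None:
        self._flags: dict[str, bool] = {}

    def set(self, key: str, enabled: bool) -> None:
        # Corresponds to flipping the toggle in the dashboard.
        self._flags[key] = enabled

    def is_enabled(self, key: str) -> bool:
        # Unknown flags default to off, so new code stays dark until enabled.
        return self._flags.get(key, False)


flags = FlagStore()


def checkout_page() -> str:
    # Each new feature in the release is wrapped in its own flag.
    if flags.is_enabled("new_checkout"):
        return "new checkout"
    return "old checkout"


def search_results() -> str:
    if flags.is_enabled("new_search"):
        return "new search"
    return "old search"


# Both features ship in the same deployment and are turned on.
flags.set("new_checkout", True)
flags.set("new_search", True)

# A bug turns up in search: switch off only that flag, with no redeploy.
flags.set("new_search", False)
```

Because each code path checks its own flag at runtime, turning off `new_search` leaves `new_checkout` live for everyone.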

Pros and cons of feature-flag deployments

- Pro: Easily switches off a single buggy feature

- Pro: Enables feature-level granularity

- Pro: Can easily grant access to features for individual users, specific audiences, or percentages of your users

- Pro: Software engineers can make changes without involving IT/DevOps

- Con: Requires adding code to your application, and can create technical debt if you don’t have a good practice for removing flags that are no longer needed

Rolling out an update to only a subset of users

The power of using feature flags to release features becomes even more apparent with canary releases, which expose features to a small subset of your user base before rolling them out to everyone. When you’re rolling out a new release with feature updates, you’ll likely want to test-drive the changes with a small group to make sure everything works correctly. In software development, this is referred to as canary testing/deployments or staged/phased rollouts.

Canary tests release new code or features to a small subset of users so that issues surface before the code reaches a larger audience. By limiting the release to a select audience, you minimize the blast radius of new releases, and teams can validate functionality and performance before rolling out to all users. This approach also helps detect issues that only become visible once code moves from development or staging environments to production.
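A staged rollout can be sketched as a loop that widens exposure only while health checks pass. This is a minimal Python sketch under stated assumptions: the stage percentages and the `set_exposure` and `is_healthy` hooks are hypothetical placeholders for whatever your tooling provides.

```python
from __future__ import annotations

from typing import Callable, Sequence

# Illustrative stages (percent of users exposed); real schedules vary.
STAGES = (1, 5, 25, 50, 100)


def staged_rollout(
    set_exposure: Callable[[int], None],
    is_healthy: Callable[[int], bool],
    stages: Sequence[int] = STAGES,
) -> int:
    """Widen exposure stage by stage; pull back to 0% on a failed check.

    `set_exposure` and `is_healthy` are hypothetical hooks: one changes how
    much traffic sees the new code, the other inspects metrics (errors,
    latency) gathered at that stage. Returns the final exposure percentage.
    """
    for pct in stages:
        set_exposure(pct)
        # A real rollout would pause here long enough to collect metrics.
        if not is_healthy(pct):
            set_exposure(0)  # contain the blast radius
            return 0
    return stages[-1]


# Simulated run: the health check starts failing above 25% exposure.
exposure_log: list[int] = []
result = staged_rollout(exposure_log.append, lambda pct: pct <= 25)
```

In the simulated run, the problem is caught at the 50% stage, so the rollout is pulled back before it ever reaches all users.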

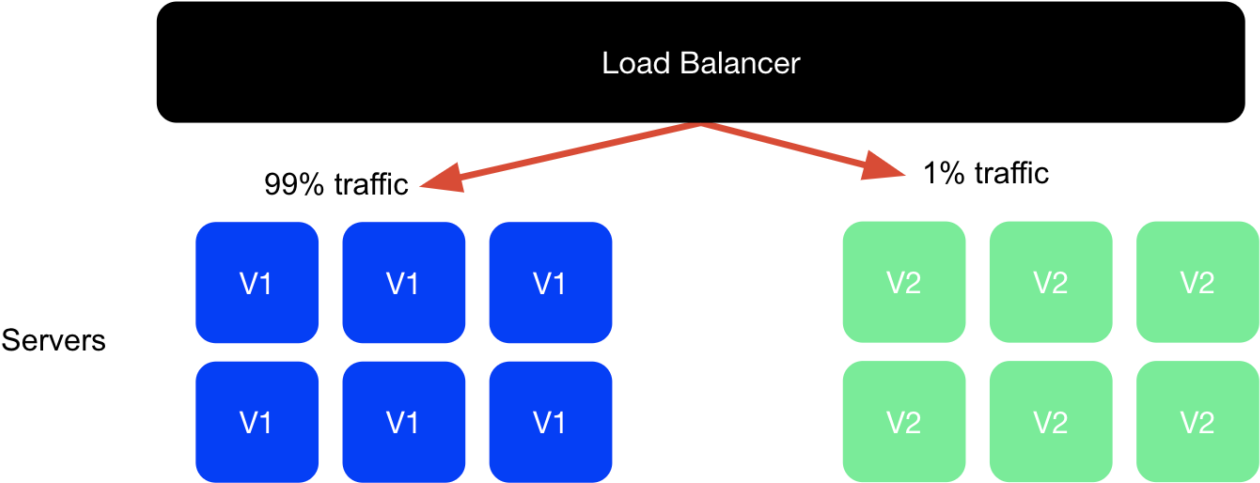

It’s possible to perform canary tests through both blue-green deployments and feature flagging, though with blue-green deployments the same challenges of directing traffic at the server level still apply.

Canary tests through blue-green deployments: Instead of directing all your traffic from the blue group of servers that hosts the current version of your application to the green group of servers, you can move just a percentage to the new version. However, you still don’t have control at the feature level and need to roll back the entire release if one feature is broken. Also, if you want more control over which customers receive access to a feature, it is complicated to define rules at the server level.
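For comparison, a blue-green canary amounts to a weighted coin flip at the router. In this minimal sketch, `pick_pool` is a hypothetical function rather than a real load balancer configuration. The decision sees only the request, not the features it touches. A purely per-request split can also bounce a user between versions unless the load balancer pins sessions.

```python
import random


def pick_pool(green_weight: float, rng: random.Random) -> str:
    """Hypothetical weighted router for a blue-green canary.

    `green_weight` is the fraction of requests (0.0 to 1.0) sent to the green
    servers. The choice ignores which features a request uses, so each
    request gets either the whole new release or none of it.
    """
    return "green" if rng.random() < green_weight else "blue"


rng = random.Random(42)  # seeded so the split is reproducible
sample = [pick_pool(0.10, rng) for _ in range(10_000)]
green_share = sample.count("green") / len(sample)  # expected to be about 0.10
```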

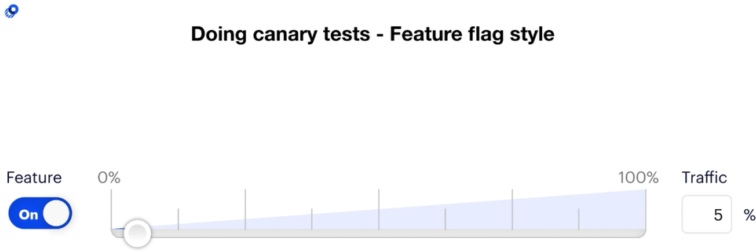

Canary tests through feature-flag deployments: With feature flags, you can run a canary test on each feature that has an on/off switch by selecting the portion of traffic you’d like to expose to it. If a particular feature has a bug, you can simply turn it off instead of reverting to the previous release. You can also use audience targeting to enable features for specific customers or customer segments (e.g., just customers on the free tier).
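A per-feature canary with audience targeting can be sketched as follows. This assumes a hypothetical `FlagRule` shape. It hashes the flag key plus the user ID into a stable bucket from 0 to 99, a common approach to percentage rollouts, though not necessarily how any particular vendor implements it.

```python
from __future__ import annotations

import hashlib
from dataclasses import dataclass, field


@dataclass
class FlagRule:
    """Hypothetical per-feature rollout rule (not any vendor's schema)."""

    enabled: bool = True  # kill switch for this one feature
    percentage: int = 0   # 0-100: share of eligible users who get it
    audiences: set[str] = field(default_factory=set)  # empty = everyone


def bucket(flag_key: str, user_id: str) -> int:
    """Map a (flag, user) pair to a stable bucket from 0 to 99."""
    digest = hashlib.sha256(f"{flag_key}:{user_id}".encode()).digest()
    return int.from_bytes(digest[:4], "big") % 100


def is_enabled(flag_key: str, rule: FlagRule, user_id: str, segment: str) -> bool:
    if not rule.enabled:
        return False  # feature switched off: no redeploy or rollback needed
    if rule.audiences and segment not in rule.audiences:
        return False  # user is outside the targeted audience
    return bucket(flag_key, user_id) < rule.percentage


# Canary the new search feature to 10% of free-tier customers only.
search_rule = FlagRule(percentage=10, audiences={"free_tier"})
users = [f"user-{i}" for i in range(1000)]
exposed = [u for u in users if is_enabled("new_search", search_rule, u, "free_tier")]
```

Hashing the flag key together with the user ID keeps each user’s assignment stable across requests and servers, while giving different features independent buckets.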

Recap

- Feature flags provide a more granular, easy-to-use solution to reduce risk when releasing new features

- Feature flags empower software development teams to manage their releases and reduce the need for operations support

- Feature flags can be used to run canary tests at the user level, providing more control than at the server level

If you’re looking to get started with feature flags, check out our free solution: Optimizely Rollouts.

- Last modified: 12/30/2025 8:09:17 PM