“The best ideas emerge when very different perspectives are met.” - Frans Johansson

Is your experiment program stuck in a rut? Are the test ideas on your roadmap sparse or very one-sided? Are you in need of a little more creativity and inspiration to boost the value of the program? If yes, these are all key indicators that you may need to facilitate an experiment ideation session.

So how can virtual experiment ideation sessions help? They dedicate a specific time and virtual space to:

- Be hyper-focused on your users or visitors

- Gather unique perspectives from different people

- Encourage creativity and engagement as a team

Altogether, they allow you to generate innovative new ideas that wouldn’t have been possible working individually in silos. In this article, I will show you in two parts how I’ve run productive virtual ideation sessions with Optimizely’s customers: (a) the tools and setup needed and (b) a step-by-step facilitator guide.

Setting it Up

There are plenty of software options available to virtually re-create the in-person whiteboard to which your team may have been accustomed. Options range from simple (using a Google Slide) to more robust and purpose-built platforms like Mural and Miro.

To get started, you’ll need to prepare your ideation session by selecting and setting up some basic tools:

- Virtual whiteboard - An expansive virtual space that allows for panning/zooming and easy navigation helps with usability. This is where a blank PowerPoint/presentation slide may not cut it.

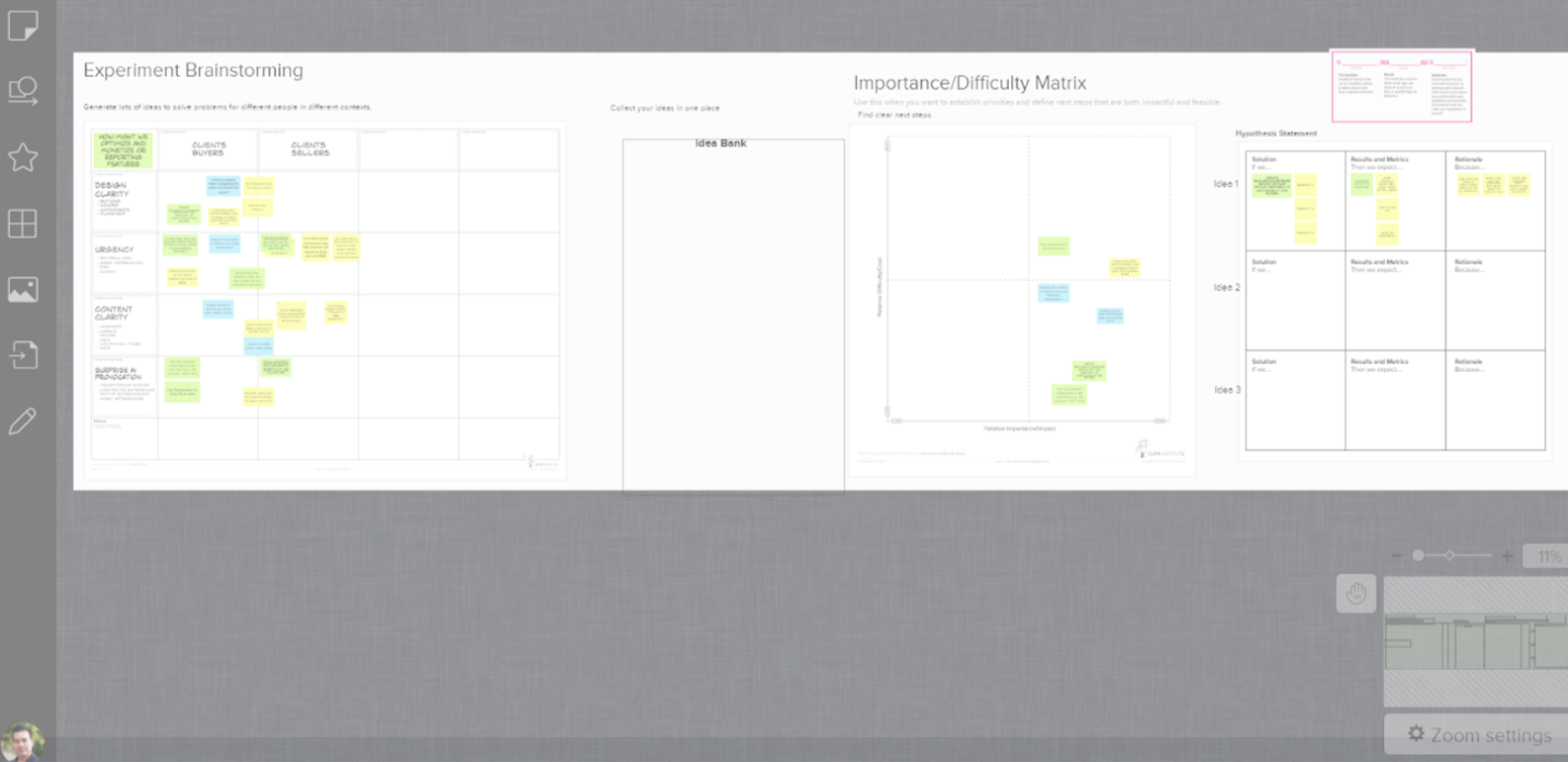

A highly usable and expansive virtual whiteboard in Mural.

- Ideation framework - A framework of your choosing that helps organize the ideas, structures the conversation and is conducive to eliciting new ideas. You may have your own framework depending on the ideas you're trying to elicit or the problem you’re trying to solve. Some popular frameworks that we use at Optimizely for our customers are:

- Problem Solution Result - A universal approach to generating experiment ideas that’s rooted in first identifying and qualifying a user problem. All too often I see experiment ideas rooted in ‘hunches’ and not necessarily data. With a problem statement identified, participants are asked to brainstorm as many solutions as possible and then to rank them in order of impact (e.g., ability to solve the problem). These elements can then be encapsulated on your whiteboard as an Experimentation Canvas that you can collaboratively complete. This approach can be applied to all aspects of your users’ or visitors’ experiences. For further details, see my colleague’s post on how to run a hypothesis workshop rooted in this framework.

- Creative Matrix - This approach values divergent thinking by encouraging quantity of ideas over quality of ideas in an exploratory fashion. A business problem constructed as a “how might we...?” question is the center point (e.g., “How might we encourage visitors to explore our product/pricing plans?”). Rows and columns are then drawn in a grid, with each column designating a customer segment (could be New Visitors, Return Visitors, etc.) or even a persona (Student, Small Business Owner, Enterprise). Each row in the grid designates a particular technology, enabling solution or value proposition (more on how this comes into play in the facilitator guide below).

- Virtual sticky notes/cards - If using a shared whiteboard space, having cards color-coded uniquely to each participant is helpful for traceability and facilitating the discussion.

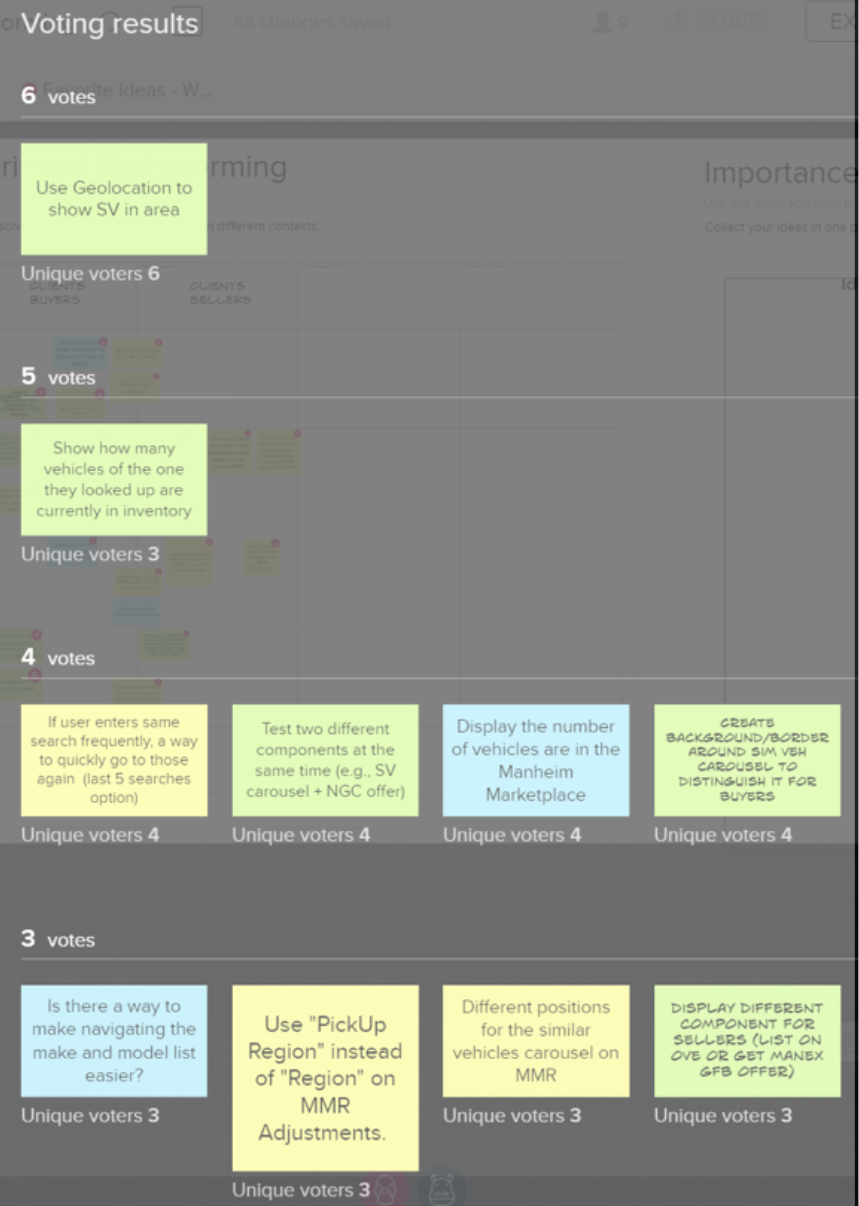

- Group Voting - There will be a time to vote on ideas (we’ll get into the process). I like how Mural handles this: you can assign each participant a set number of votes to allocate to the ideas they like best. Alternatively, if using a more general whiteboard space (e.g., Google Slides), you could represent votes with dots and manually tally them.

MURAL has features to vote on ideas and display the results directly on the cards.

In lieu of a robust collaboration platform, you can have dots or shapes represent votes in a Google Slide.

- Timer - The whiteboard session should be time-boxed. No need to be fancy, but something that everyone can see is useful, like the visual countdown timer in Google's search results.

- Exportable - The ideas will need to be executed, so the output of the session needs to be exported in a format that is shareable and can be retained for future reference (e.g., PDF, image file, the virtual whiteboard itself, etc.). It also helps if external stakeholders can get back into the whiteboard without the additional friction of signing in or registering.

(Tip: Many whiteboard platforms can create share-links to send to external stakeholders without requiring individual logins. This is helpful for generating excitement around the workshop and can lead to follow-up sessions, which is a benefit of a virtual session!)

Running the Virtual Session: Step-by-Step Facilitator Guide

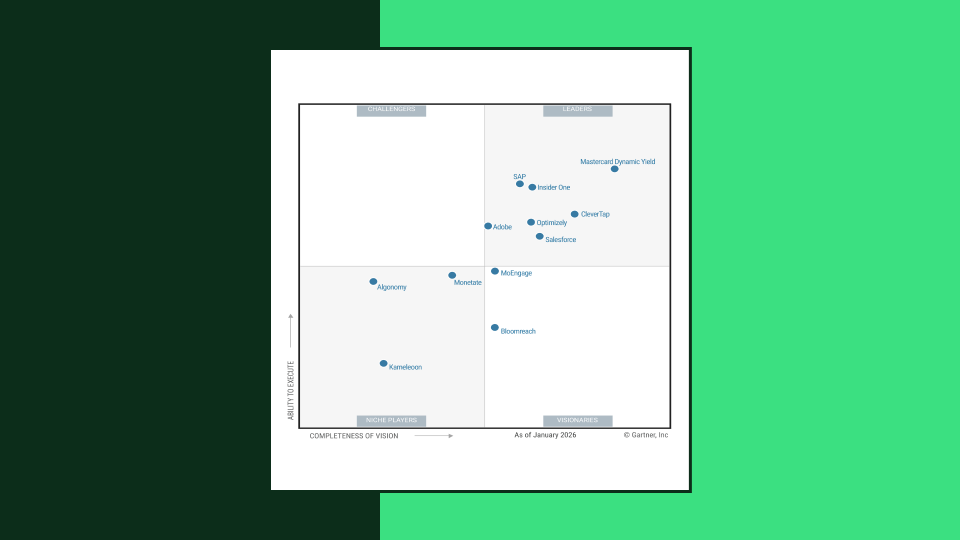

Now that we have all the virtual tooling in place, let’s work through how to facilitate the virtual workshop. At Optimizely, we call our ideation workshops hypothesis workshops because the end goal is to have a collection of hypotheses that can feed into a testing roadmap. Now that we understand the output, let’s get to the step-by-step process for facilitating a productive hypothesis workshop of your own, starting with the agenda.

A good starting point for an agenda is:

- Introduction and Defining the Problem Statement - 5 mins

- Individual Brainstorming - 5 mins per problem (resist the urge to extend this too long - limiting this to a shorter period lets the top ideas surface and keeps participants from “reaching” for obscure ideas)

- Idea Read Out - 10 mins (highly dependent on the number of participants; I recommend limiting the group to five participants to make it easier to facilitate and to limit the number of ideas to discuss)

- Group Voting - 5 mins

- Prioritization Activity - 10 mins

- Framing the Hypothesis - 10 mins
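
To sanity-check how long a session will run before sending the invite, the agenda above can be added up in a few lines. This is a minimal sketch: the `AGENDA` structure and `session_minutes` helper are hypothetical names, and it assumes that only Individual Brainstorming scales with the number of problems, since that is the only item listed "per problem".

```python
# Agenda items from the list above: (name, minutes, repeats_per_problem).
# Assumption: only Individual Brainstorming repeats for each problem.
AGENDA = [
    ("Introduction and Defining the Problem Statement", 5, False),
    ("Individual Brainstorming", 5, True),
    ("Idea Read Out", 10, False),
    ("Group Voting", 5, False),
    ("Prioritization Activity", 10, False),
    ("Framing the Hypothesis", 10, False),
]


def session_minutes(num_problems: int = 1) -> int:
    """Total time-boxed minutes for a session covering num_problems problems."""
    return sum(
        minutes * (num_problems if per_problem else 1)
        for _name, minutes, per_problem in AGENDA
    )


one_problem = session_minutes(1)   # 45 minutes
two_problems = session_minutes(2)  # 50 minutes
```

With a single problem statement this comes to 45 minutes, which leaves a little buffer in a one-hour meeting slot.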

Let’s break down how we prepare for and facilitate each one of these activities:

Prework and Problem Definition

There may be some pre-work needed to have individuals added to whichever software platform you’re using to host the virtual whiteboard. Sometimes, a light introduction is needed, and many software vendors have self-guided user onboarding, so I recommend that participants log on beforehand and familiarize themselves with the experience.

In the meeting invite, be sure to define the identified problem statement for which we’re developing hypotheses/solutions. This should be a user problem that’s specific enough, tied to a business KPI, and not a solution in itself. See these examples on formulating the perfect user problem.

It may take a few prep meetings with key stakeholders to align the top user problems to focus on. I’ve even seen entire workshops dedicated to just problem definition (yes, it’s that important to know what you’re solving for!).

If using a Creative Matrix, it’s helpful to have categories for each of the ideas/solutions (Design Clarity, Content Clarity, Urgency, etc.). Add another column for each additional user group or stakeholder impacted by the problem.
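
If your whiteboard tool doesn't have a ready-made grid template, it can help to think of the Creative Matrix as a simple data structure: one cell per combination of category (row) and user segment (column). The sketch below is only illustrative; the function names and the sample idea are hypothetical, and the segment and category labels are borrowed from the examples in this article.

```python
from itertools import product


def build_creative_matrix(segments, categories):
    """Return an empty grid: one list of ideas per (category, segment) cell."""
    return {
        (category, segment): []
        for category, segment in product(categories, segments)
    }


def empty_cells(matrix):
    """Cells nobody has added an idea to yet (useful prompts for the group)."""
    return [cell for cell, ideas in matrix.items() if not ideas]


segments = ["New Visitors", "Return Visitors"]                # columns
categories = ["Design Clarity", "Content Clarity", "Urgency"]  # rows
matrix = build_creative_matrix(segments, categories)

# Hypothetical idea added by a participant during brainstorming.
matrix[("Urgency", "New Visitors")].append("Show a limited-time trial banner")
```

Listing the empty cells toward the end of the brainstorm is one way to nudge participants toward segments or categories that haven't received any attention.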

Introduction

Start with introducing the purpose of this session. I like the format of answering the “why” (why is this important?, why now?), then the “how” (how will this be facilitated?, how will we elicit ideas?) and then move to the “what” (what is the problem statement?).

Also, use this time to address any FUDs (fear, uncertainty, doubt) that participants have. This is the point to allay any concerns with the process. Common doubts I hear from teams are “how will I know that my idea will be taken seriously and not ignored?” or “I have ideas that require a lot of change and effort, should I voice these, too?”

Generally, I like to employ the “creative matrix” framework to guide the brainstorming process.

Individual Brainstorming

Set a timer and time-box the brainstorming activity (5 or 10 mins). This part of the activity is individual and gives participants a chance to add their own ideas to the “board” without the group influencing what they think is a good idea.

Remind participants not to worry if their ideas overlap with someone else’s, or about the granularity of the idea yet - we’ll refine the specificity of the idea later. The idea should be framed as having two components: the proposed solution and a rationale (either qualitative or quantitative) that indicates why this solution would solve the problem.

Share and discuss the ideas

One by one, read out the ideas and encourage discussion. Have the idea owner field clarifying questions and share supporting evidence for why this idea would work. Decide if the idea needs to be elaborated in greater detail or broken into more granular ideas. My rule-of-thumb: if the solution can be implemented by a “pizza-sized team” (a team that can be fed by a single pizza), then it's the right size.

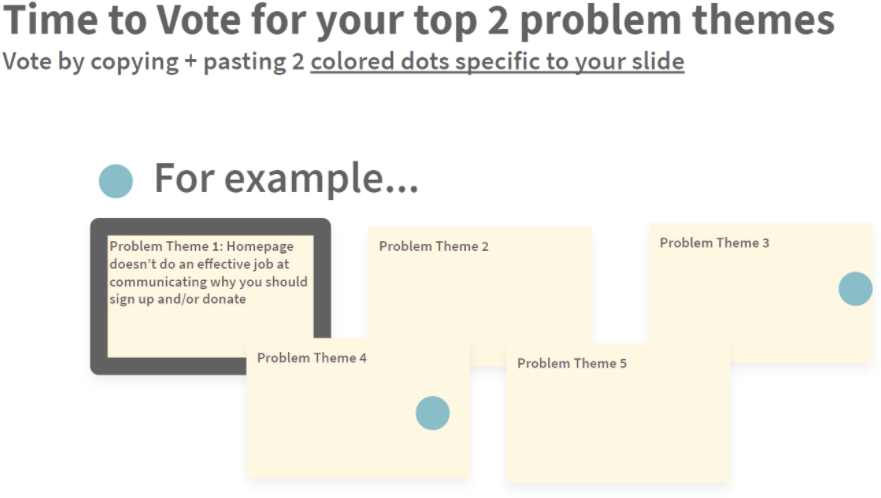

Group Voting

Give the participants a set number of votes that they can use to indicate which ideas have the most potential for solving the stated problem. This is by no means a replacement for a comprehensive scoring rubric, which can come later when these ideas get added to the larger roadmap (our partners at WiderFunnel use PIE). Decide in advance how many votes each participant has available and whether they’re allowed to cast more than one vote for the same idea.

Once the votes are tabulated, the distribution may require the facilitator to act as the tiebreaker.
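
If you're tallying votes by hand (for example, dots on a Google Slide), a small script can do the counting and flag where a tiebreak is needed. This is a rough sketch rather than a feature of any whiteboard tool: the function names, the default of three votes per participant, and the sample ballots are all hypothetical.

```python
from collections import Counter


def tally_votes(ballots, votes_per_participant=3, allow_repeat_votes=True):
    """Count votes per idea, enforcing the rules agreed before voting."""
    counts = Counter()
    for participant, picks in ballots.items():
        if len(picks) > votes_per_participant:
            raise ValueError(f"{participant} cast more than {votes_per_participant} votes")
        if not allow_repeat_votes and len(set(picks)) < len(picks):
            raise ValueError(f"{participant} voted for the same idea more than once")
        counts.update(picks)
    return counts


def split_winners_and_ties(counts, top_n):
    """Return (clear_winners, tied_ideas) when filling the top_n slots."""
    ranked = counts.most_common()
    if len(ranked) <= top_n:
        return [idea for idea, _ in ranked], []
    cutoff = ranked[top_n - 1][1]  # vote count of the last qualifying slot
    clear = [idea for idea, votes in ranked if votes > cutoff]
    at_cutoff = [idea for idea, votes in ranked if votes == cutoff]
    if len(clear) + len(at_cutoff) == top_n:
        return clear + at_cutoff, []  # no tie across the cut line
    return clear, at_cutoff           # facilitator breaks the tie


# Hypothetical ballots: participant -> ideas they voted for.
ballots = {
    "Ana": ["Pricing table", "Sticky CTA", "Sticky CTA"],
    "Ben": ["Sticky CTA", "Trust badges", "Pricing table"],
    "Cy": ["Trust badges", "Shorter form", "Pricing table"],
}
counts = tally_votes(ballots)
winners, tied = split_winners_and_ties(counts, top_n=1)
```

In this example two ideas share the top vote count, so `winners` is empty and `tied` holds both of them; that is the point at which the facilitator steps in.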

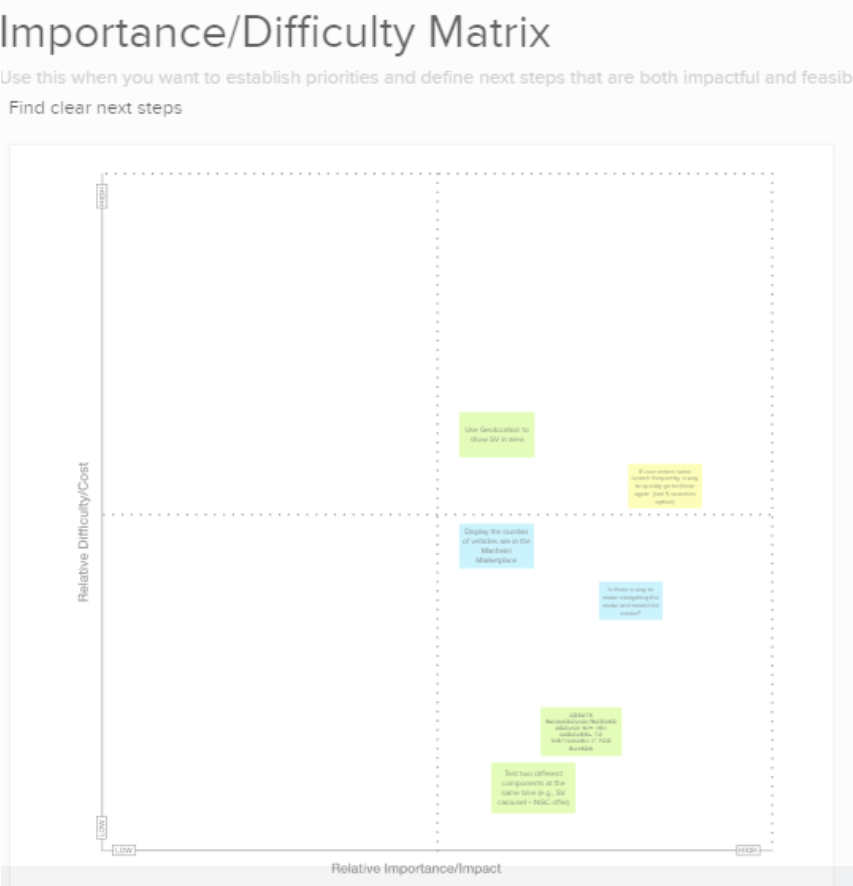

Effort vs. Impact Prioritization

This step encourages participants to consider the effort of implementing the idea versus the impact that it may have on solving the problem. A way to frame this is to plot the cards on a matrix with low to high effort going up the vertical axis and low to high impact going left to right on the horizontal axis.

As a group, take the top ideas (e.g., top 5) and ask how they would order the ideas along the bottom axis by Impact only (which one has the highest likelihood of solving the problem?). Then in another round, do the same for relative Effort. Asking the group to rank the ideas relative to one another is beneficial in that it avoids having to define a specific scoring rubric, which can often lead to nitpicky discussions on what qualifies as a “5” versus a “4”. It’s easier for people to make relative comparisons than to assign absolute scores.

As a group, it’s helpful to plot your ideas on this Effort vs. Impact matrix to see relative importance.

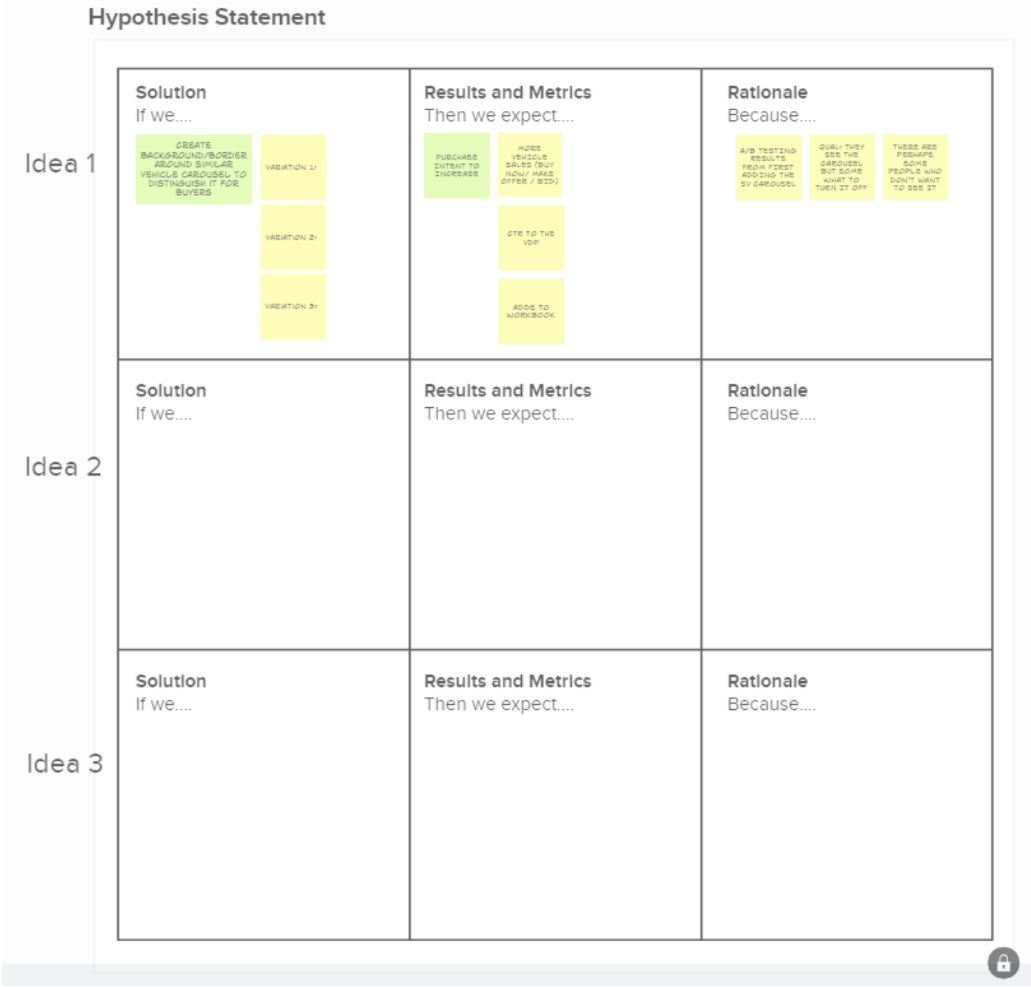
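
To make the two ranking rounds concrete, here is a minimal sketch of how relative rankings could be turned into quadrant placements. It assumes each ranking is a list ordered from lowest to highest, and that the halfway point of the list separates "low" from "high"; both the function name and that midpoint rule are my own assumptions, so adjust them to however your group draws the lines.

```python
def place_on_matrix(impact_order, effort_order):
    """Map each idea to (impact, effort) labels from two relative rankings.

    Both lists are ordered from lowest to highest and must contain the
    same ideas. Assumption: the midpoint of the list splits low from high.
    """
    if set(impact_order) != set(effort_order):
        raise ValueError("Both rankings must contain the same ideas")
    midpoint = len(impact_order) / 2
    placement = {}
    for idea in impact_order:
        impact = "high" if impact_order.index(idea) >= midpoint else "low"
        effort = "high" if effort_order.index(idea) >= midpoint else "low"
        placement[idea] = (impact, effort)
    return placement


# Hypothetical top-5 ideas, labelled A to E, ranked lowest to highest.
impact_order = ["E", "D", "C", "B", "A"]  # A has the highest impact
effort_order = ["A", "C", "E", "B", "D"]  # D takes the most effort

placement = place_on_matrix(impact_order, effort_order)
quick_wins = [idea for idea, spot in placement.items() if spot == ("high", "low")]
```

Here only idea A lands in the high-impact, low-effort group, so it would be the first candidate to carry forward.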

Framing the Hypothesis

After the first attempt at idea prioritization, take the ideas that are both high-impact and low-effort (the bottom right quadrant when effort runs up the vertical axis and impact runs left to right). These are the ideas that, as a group, we can begin building a hypothesis around. Just like any good hypothesis, it should be framed in a simple statement that includes the solution, the result(s), and the rationale. For example: If we do “X”, then we expect “Y”, because of “Z”. Stated this way, “X” is your proposed solution, “Y” is the expected result as measured by your metrics, and “Z” is your rationale.
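
One lightweight way to keep every hypothesis in the same shape is a small template that maps onto the X, Y and Z parts of the statement. The class below is a hypothetical sketch, not an Optimizely feature, and the example values are invented for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Hypothesis:
    solution: str         # "X": what we will change
    expected_result: str  # "Y": the metric movement we expect
    rationale: str        # "Z": the evidence behind the idea

    def statement(self) -> str:
        """Render the hypothesis in the If/then/because format."""
        return (
            f"If we {self.solution}, then we expect {self.expected_result}, "
            f"because {self.rationale}."
        )


# Invented example values; replace with the output of your own session.
example = Hypothesis(
    solution="add a plan comparison table to the pricing page",
    expected_result="more visitors to click through to a plan",
    rationale="visitors currently switch back and forth between plan pages",
)
text = example.statement()
```

Because all three fields are required, an idea that is missing its rationale can't be written down as a hypothesis, which is a useful forcing function during the session.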

The hypothesis could be focused on achieving quantitative results (e.g., uplift in conversions) or its purpose can be to gather some qualitative learning (e.g., a painted door test). The final output of the ideation session is the set of top ideas, each summarized as a hypothesis. Armed with this, it’s a minor step to enter these details into a test plan.

Now that we have fully formed hypotheses, we’ve reached the goal of the ideation session. Depending on your existing process, these new ideas can either be added to a larger roadmap where they’re evaluated holistically (perhaps with a more detailed scoring rubric) or green-lighted as they stand.

The next step would be to follow your existing experimentation process, which generally means detailing the idea at greater granularity in a test plan to understand the experiment configuration (e.g., on which pages would this test run? How will we configure the stated metrics? Will they be measured as click events, pageview events or custom events?).

Now that you’ve accomplished one successful workshop, let’s not stop there. You’re now armed to repeat this with additional functional teams to drive high-impact testing in your company.

- Last modified: 12/30/2025 8:17:47 PM