For Killer Response Rates in Large Campaigns, Try Experimental Design

Your customer base is dynamic and complex. If you’re trying to optimize across your entire population of users, traditional A/B experiments may fall short.

This is a guest post from John Senior, a partner at Bain & Company. Bain is a management consulting firm headquartered in Boston. John Senior leads Bain’s Customer Insights & Segmentation work.

Your customer base is dynamic and complex. If you’re trying to optimize across your entire population of users, traditional A/B experiments may fall short. In this case, you should consider a technique we call experimental design, because it allows for a massive increase in the amount of variance in your digital marketing campaigns.

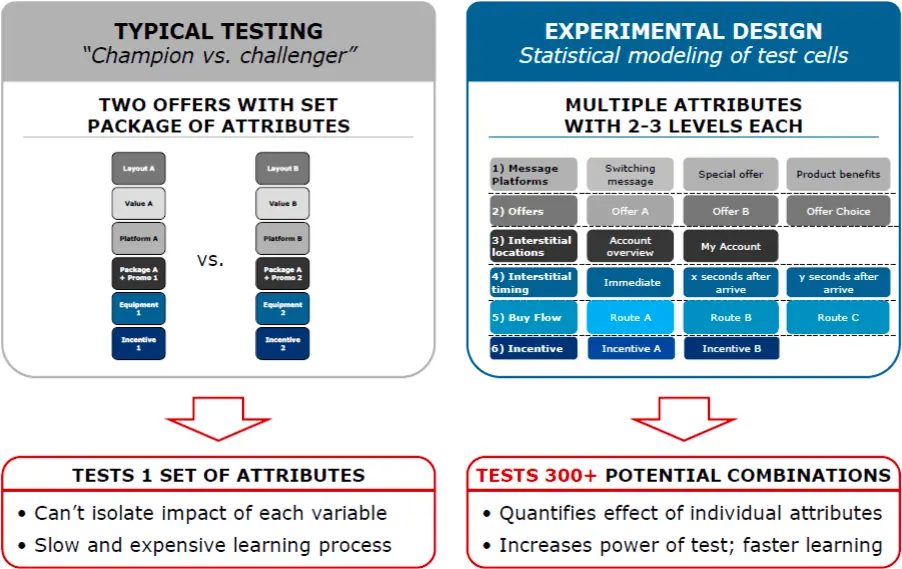

Experimental design is a form of multivariate testing. Where an A/B test makes a binary change, this technique lets marketers massively increase the number of possible combinations of individual variables in a digital marketing campaign. Marketers can test and project the impact of many variables (such as web page formats and designs, product offers, messages, incentives, interstitials, and so on) by testing just a few combinations of them.
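To make the idea of projecting from a few combinations concrete, here is a minimal Python sketch of one standard approach, a two-level fractional factorial design analyzed with a main-effects model. It is an illustration under stated assumptions, not the method used in any campaign described in this post: the six variable names, the two levels per variable, and every response rate are hypothetical. Eight test cells are used to estimate the effect of each variable, and those estimates are then used to project all 64 possible combinations.

```python
from itertools import product

# Hypothetical two-level variables (illustrative names only).
FACTORS = ["offer", "message", "incentive", "page_format",
           "interstitial_timing", "interstitial_location"]

def build_design():
    """Return 8 test cells; each codes the 6 variables as -1 or +1."""
    design = []
    for a, b, c in product((-1, 1), repeat=3):
        # The last three columns are products of the first three, which keeps
        # all six columns balanced and mutually orthogonal.
        design.append((a, b, c, a * b, a * c, b * c))
    return design

def fit_main_effects(design, responses):
    """Estimate a baseline rate and one coefficient per variable."""
    n = len(design)
    baseline = sum(responses) / n
    coefs = [sum(row[j] * y for row, y in zip(design, responses)) / n
             for j in range(len(design[0]))]
    return baseline, coefs

def predict(baseline, coefs, combo):
    """Project the response rate of any combination, tested or not."""
    return baseline + sum(c * x for c, x in zip(coefs, combo))

design = build_design()

# Made-up "true" effects, used only to simulate the 8 cell response rates
# so that the recovery of the effects can be checked.
TRUE_BASELINE = 0.040
TRUE_COEFS = [0.010, 0.004, 0.000, 0.006, -0.002, 0.003]
responses = [predict(TRUE_BASELINE, TRUE_COEFS, row) for row in design]

baseline, coefs = fit_main_effects(design, responses)

# Project every possible combination and pick the strongest one.
all_combos = list(product((-1, 1), repeat=len(FACTORS)))
best = max(all_combos, key=lambda combo: predict(baseline, coefs, combo))
```

The trade-off in a design this small is that each variable's effect cannot be separated from certain two-variable interactions, so the projection is only as good as the assumption that the variables act roughly independently. Larger designs relax that assumption at the cost of more test cells.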

We have seen multivariate experiments increase response rates to promotional offers for products, subscriptions, and other marketing campaigns by 3 to 5 times, adding hundreds of millions of dollars of value.

In this post, we’ll discuss how to make large-scale experimental design campaigns actionable.

Complex customer bases, tough nuts to crack

Multivariate testing, or experimental design, works best for companies that deal directly with a high volume of customers, such as telecommunications and cable firms, banks, online retailers and credit card providers. It can be more complex to run than A/B testing, and it requires a solid understanding of each customer segment’s demographics and behavior, and of how to target that segment. You’ll probably have to orchestrate a number of external vendors, and you will certainly need to get departments like finance and IT on board early on. But experimental design is well suited to the big, tough marquee issues you’re trying to address.

We recently worked with a communications service provider to create experimentally designed digital marketing campaigns to improve cross-selling to existing customers. We tested 6 variables mapping to 12 test cells, including offers, messages and the timing and location of interstitial pages. At the end of the campaign, we modeled response rates for every possible combination of variables—over 300 combinations in all, depicted below. The best combinations achieved 2 to 3 times the response rate of the existing champion offer, depending on the segment of consumer, and the marketing organization learned exactly which variables cause people to respond.
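A quick count shows why a dozen cells can stand in for hundreds of combinations. The Python sketch below is illustrative only: the number of levels per variable is an assumption chosen so that the total lands above 300, and two of the variable names (incentive, page_format) are placeholders, since the post does not list all six variables or their levels.

```python
from math import prod

# Hypothetical number of levels for each of the 6 variables.
levels = {
    "offer": 3,
    "message": 3,
    "incentive": 3,              # placeholder variable
    "page_format": 3,            # placeholder variable
    "interstitial_timing": 2,
    "interstitial_location": 2,
}

# Every possible combination of levels (a full factorial test).
full_factorial = prod(levels.values())

# A main-effects model needs one baseline plus (levels - 1) terms per variable.
main_effect_params = 1 + sum(k - 1 for k in levels.values())

test_cells = 12
enough_cells = test_cells >= main_effect_params
```

Under these assumed levels there are 324 combinations but only 11 quantities to estimate, so 12 well-chosen cells can be enough for a main-effects model. Having at least as many cells as parameters is a necessary condition rather than a guarantee; the cells also have to be chosen so that the variables are balanced against one another.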

Surprises worth a lot of money

The campaign uncovered unexpected results. For example, we had expected that certain types of gift cards would spur higher response rates. In fact, those incentives didn’t move the needle at all. We also found that the presentation of the offers themselves caused different response rates for different segments. Other high-performing attributes included two calls to action that were soft sell rather than hard sell.

When experimenting with messages, we found it helpful to apply research in behavioral economics. For instance:

- The teaser message “don’t miss out” derives some of its power from the phenomenon of loss aversion, referring to people’s tendency to strongly prefer avoiding losses (what they would miss) to acquiring gains. Read more on framing messages for increased conversion.

- People are more likely to choose an option in the middle, a phenomenon known as the compromise effect. So the “better” option in a good/better/best presentation got more than its fair share of customers and could be priced accordingly.

From concept to launch, this multivariate test took three months and is running alongside other tests. With the test running on digital channels, we could track results within a few days. We can also closely track fatigue — the tendency for response rates to particular offers to diminish over time — and use the real-time feedback to refresh the messages and incentives to drive more lift in response.
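One simple way to watch for fatigue is to compare the most recent moving-average response rate with the best moving average seen so far. The Python sketch below is a hypothetical illustration: the 7-day window, the 0.8 refresh threshold, and the daily rates are all assumptions, not figures from the campaign.

```python
def fatigue_ratio(daily_rates, window=7):
    """Latest moving-average response rate divided by the peak moving average."""
    if window < 1 or len(daily_rates) < window:
        raise ValueError("need at least one full window of daily rates")
    averages = [
        sum(daily_rates[end - window:end]) / window
        for end in range(window, len(daily_rates) + 1)
    ]
    peak = max(averages)
    # A ratio of 1.0 means the offer is performing at its best level so far.
    return averages[-1] / peak if peak > 0 else 1.0

# Made-up daily response rates for one offer over three weeks.
daily_rates = [0.050] * 7 + [0.045] * 7 + [0.030] * 7

ratio = fatigue_ratio(daily_rates)
needs_refresh = ratio < 0.8  # hypothetical trigger for refreshing the offer
```

A ratio well below 1.0 suggests the offer has lost pull relative to its own peak and that the message or incentive is a candidate for a refresh.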

Is your organization ready?

Experimental design alone won’t make web design and digital marketing more effective. In order to put the results to work, the organization also has to adapt in a few ways:

Commit to taking action. Besides lining up all the functional departments early on, it’s crucial to make sure the business units themselves are ready and committed to using the insights. Business unit heads may be reluctant to change current marketing campaigns because they’re worried about downside risk. You’ll want to prove that the sample size is large enough, that the variables will apply on a large scale, and that the financial model for measuring the testing program ROI is robust.
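For the sample-size question, a common back-of-the-envelope check is the normal-approximation formula for comparing two response rates. The Python sketch below assumes a two-sided test at 5% significance with 80% power, and the 2% and 3% response rates are made up for illustration. It sizes a single pairwise comparison and does not adjust for testing many cells at once.

```python
from math import ceil
from statistics import NormalDist

def cell_size(p_control, p_test, alpha=0.05, power=0.80):
    """Approximate customers needed per cell to detect p_control vs. p_test."""
    if p_control == p_test:
        raise ValueError("the two response rates must differ")
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided significance
    z_power = NormalDist().inv_cdf(power)
    variance = p_control * (1 - p_control) + p_test * (1 - p_test)
    return ceil((z_alpha + z_power) ** 2 * variance / (p_control - p_test) ** 2)

# Hypothetical: the champion offer converts at 2%, and we want to detect 3%.
n_per_cell = cell_size(0.02, 0.03)
```

Smaller expected lifts need much larger cells, which is one reason high-volume businesses are better placed to run these tests.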

Define each customer segment. Besides the obvious need for expertise in statistical modeling, successful experimentally designed marketing tests depend on developing other capabilities, like the ability to draw up high-definition portraits of the customer segments based on actual customer characteristics held in company databases, not just on research.

We found that we could draw insights about a customer from analyzing his or her route through the website before a purchase—actions like paying the bill, streaming content, tinkering with account settings or other behavior.

Another key capability involves targeting—the ability to automatically target customers at different parts of a website path or conversion funnel based on their usage patterns.

Invest in training. Launching multivariate tests efficiently and making sure the resulting insights get used in subsequent campaigns usually requires new internal processes and training. Salespeople and call-center agents may need new scripts to help them manage customer calls in response to different offers, or to effectively up-sell customers to the highest-value products in order to close the loop of the experiment.

Streamline decision making. Based on the financial modeling, companies should put in place financial thresholds, such as profitability targets, that serve as guardrails for subsequent campaigns when testing variables such as promotions and incentives and their effects on customer response rates. These thresholds help speed up decision-making and create a repeatable, efficient test-and-learn model.
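A guardrail of this kind can be as simple as a profit-per-contact floor that every candidate offer must clear before it goes live. The Python sketch below is hypothetical throughout: the field names, the profit formula, and all of the figures are assumptions for illustration, and a real financial model would likely include more terms.

```python
from dataclasses import dataclass

@dataclass
class OfferEconomics:
    response_rate: float        # modeled share of contacted customers who accept
    margin_per_response: float  # contribution margin from one accepted offer
    incentive_cost: float       # incentive paid out per accepted offer
    contact_cost: float         # cost of presenting the offer to one customer

def profit_per_contact(offer):
    """Expected profit from showing the offer to one customer."""
    net_margin = offer.margin_per_response - offer.incentive_cost
    return offer.response_rate * net_margin - offer.contact_cost

def passes_guardrail(offer, min_profit_per_contact):
    """True when the offer clears the profitability threshold."""
    return profit_per_contact(offer) >= min_profit_per_contact

# Two made-up offers checked against a made-up threshold of 1.00 per contact.
modest_incentive = OfferEconomics(0.04, 120.0, 25.0, 0.50)
rich_incentive = OfferEconomics(0.05, 120.0, 100.0, 0.50)

approved = [passes_guardrail(o, 1.00) for o in (modest_incentive, rich_incentive)]
```

In this example the richer incentive wins more responses but fails the threshold, which is the kind of call a pre-agreed guardrail lets a team make quickly.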

With the rapid spread of mobile devices and social networks, marketers have more communication alternatives than ever before. That makes for greater opportunities, if you can uncover which attributes of the campaign actually influence customer behavior. By harnessing the power of massive variance, experimental design helps match the right offer with the right customer.