Why siloed optimization goals limit success

If there were a glass ceiling for experimentation, it would be scalability and measurement. One of the key challenges for experimenters is developing a strategy that lets them scale their optimization program and define the right metrics to measure its success.

The most innovative organizations have multiple teams testing and launching their own ideas and developing metrics to track their successes. However, when you scale experimentation across teams, one team’s changes can easily affect another team’s metrics. Not every team shares the same goals, so you also need a strategy that keeps one team’s work from harming another team’s goals.

Today, I’m Director of Product at Optimizely but I want to walk you through a real-life example from my time at Microsoft that exemplifies why optimizing goals in silos often limits success, and share some best practices I’ve learned along the way.

Learn from Bing’s performance team

I joined Microsoft 8 years ago as a newly minted program manager for the Bing site performance team. Back then, our search results page was perceptibly slower to load than the competition’s. We ran experiments that added artificial delay to the page, which showed that slower page load times (PLT) hurt user engagement and revenue (amounting to millions of dollars lost for every 100 milliseconds, or 1/10th of a second, added to the page).

“An engineer that improves server performance by 10ms (that’s 1/30 of the speed that our eyes blink) more than pays for his (or her) fully-loaded annual costs. Every millisecond counts”. – Online Controlled Experiments at Scale, Kohavi, Deng, Frasca, Walker, Xu, Pohlmann, 2013

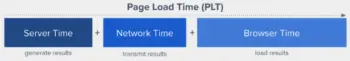

Typical factors that influence web page load time

Our leadership also said publicly that in the world of search engines:

“Two hundred fifty milliseconds, either slower or faster, is close to the magic number for competitive advantage”. – For Impatient Web Users, an Eye Blink Is Just Too Long to Wait, New York Times, 2012

Consequently, our team was given an urgent mission: get within the blink of an eye of the competition (that’s 250 milliseconds, give or take). We assembled a formidable group of performance engineers and got to work.

Every millisecond matters… but not for all teams

A few months in, we had shipped a lot of improvements validated by A/B tests. Yet, things were going south (or, to be precise, north, given we were dealing with page load times). Our overall PLT metric continued to move in the wrong direction.

The harsh reality: even after several successful experiments, we moved farther away from our target.

We soon realized why. While we were preoccupied with our work, other teams shipped features that often impacted PLT.

For example, our results relevance team was laser-focused on optimizing clicks on web results. This usually meant running more time-intensive ranking models on the server. Similarly, our Multimedia team favored shipping features that added visual richness to the page, which in turn improved engagement on the image and video results. But each of these improvements added more weight to the page, making it slower to load. And the list goes on. Over time, these changes collectively added a considerable penalty to PLT.

We needed a better plan. We turned to A/B testing again, but this time with a defensive mindset.

Fortifying our defenses

Product teams at Bing have an experiment-first philosophy for every big or small feature they want to ship. Conveniently, this allowed us to track features that impacted PLT while they were being A/B tested. We started executing a new strategy that entailed several preventive measures.

1) Experimentation served as a risk mitigation mechanism at scale

Every A/B results page included PLT as a guardrail metric. Teams could not ship a feature that hurt PLT without sign-off from the performance team. Experiment alerts also warned us about A/B tests that impacted PLT and allowed us to react with proper corrective action.
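To make the guardrail idea concrete, here is a minimal sketch of what such a check could look like. It is not Bing’s actual tooling: the function name `plt_guardrail`, the sample data, and the one-sided z-test with a 1.645 threshold are all assumptions chosen for illustration, and a production system would likely use more robust statistics.

```python
import math
import statistics


def plt_guardrail(control_ms, treatment_ms, z_threshold=1.645):
    """Hypothetical guardrail: flag an experiment whose treatment is slower.

    Returns (delta_ms, z, blocked). Uses a simple one-sided z-test on the
    difference in mean page load time; the threshold is an assumed default.
    """
    delta = statistics.mean(treatment_ms) - statistics.mean(control_ms)
    # Standard error of the difference between two independent sample means.
    se = math.sqrt(
        statistics.variance(control_ms) / len(control_ms)
        + statistics.variance(treatment_ms) / len(treatment_ms)
    )
    if se == 0:
        # No variance at all: any positive delta counts as a regression.
        z = math.copysign(math.inf, delta) if delta else 0.0
    else:
        z = delta / se
    # Blocked means: do not ship without sign-off from the metric's owners.
    return delta, z, z > z_threshold


# Illustrative (made-up) page load times in milliseconds.
control = [1000, 1010, 990, 1005, 995]
treatment = [1050, 1060, 1040, 1055, 1045]
delta_ms, z_score, blocked = plt_guardrail(control, treatment)
```

The point of the design is that the check runs on every experiment, regardless of which team owns it, so regressions surface before a feature ships rather than after.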

2) Performance budgets constrained the impact from other teams

We introduced performance budgets per team. For example, our Multimedia team was allowed to add up to +X milliseconds to the page per release cycle (roughly 6 months in duration). They had to get executive-level approval to go beyond that, or find a way to cut milliseconds elsewhere.
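A per-team budget can be thought of as a simple ledger. The sketch below is an assumption-laden illustration, not the system we used: the `TeamBudget` class, its method names, and the 20 ms figure are hypothetical (the real budget values are not given here).

```python
from dataclasses import dataclass, field


@dataclass
class TeamBudget:
    """Hypothetical ledger of PLT changes for one team in one release cycle."""

    team: str
    budget_ms: float  # allowed net PLT increase per cycle (made-up value)
    changes_ms: list = field(default_factory=list)

    @property
    def spent_ms(self):
        # Net milliseconds added so far; savings are recorded as negatives.
        return sum(self.changes_ms)

    def can_ship(self, plt_delta_ms):
        return self.spent_ms + plt_delta_ms <= self.budget_ms

    def record(self, plt_delta_ms):
        if not self.can_ship(plt_delta_ms):
            raise ValueError(
                f"{self.team}: over budget; needs approval or offsetting savings"
            )
        self.changes_ms.append(plt_delta_ms)


budget = TeamBudget(team="multimedia", budget_ms=20.0)
budget.record(15)                       # a feature that adds 15 ms
fits_before = budget.can_ship(10)       # 15 + 10 exceeds the 20 ms budget
budget.record(-8)                       # an optimization that saves 8 ms
fits_after = budget.can_ship(10)        # 7 + 10 now fits
```

Recording savings as negative entries captures the trade described above: a team that wants to ship something heavy can pay for it by cutting milliseconds elsewhere.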

3) Experts and dedicated tools assisted with resolving issues

We assigned experts to help teams mitigate the performance impact of their features. The same folks built new investigation tools that allowed us to pinpoint the culprit code that increased PLT in the confines of an A/B test.

4) Executive reports created urgency and brought accountability across the organization

Finally, we began sending regular organization-wide reports that highlighted our progress and often acknowledged the efforts of other teams. The reports reinforced the sense of urgency around performance and promoted cross-team accountability for meeting our goals.

A hypothetical performance executive report highlighting current progress and team budgets

Ultimately, this defensive strategy proved to be a winning recipe. We exceeded our targets, and we would not have come close had we focused only on shaving milliseconds off the page and not also on preventing other teams from degrading PLT.

Takeaways

I focused on performance in this blog post, but the lessons apply to any optimization goal that is not equally shared by teams in your organization. My main takeaways from this effort:

- Don’t optimize goals in silos if you want to maximize your chances of success.

- A/B testing, particularly when widely adopted within your organization, is an effective method for monitoring at scale the impact of new features or changes on the metrics you care about.

- When necessary, assign team budgets to constrain the impact of other teams’ changes on your metrics.

- Don’t leave other teams helpless. Set them up for success by providing guidance and tools to overcome issues related to your metrics.

- Promote cross-team accountability in meeting your goals. Acknowledge the effort of other teams that are helping you reach your objectives.